Four years ago, Noted cofounders Terenze Yuen and Fai Tung came out of an hours-long client meeting with two things: a very lengthy recording and a total lack of enthusiasm for transcribing it. “You can imagine the pain,” Yuen says.

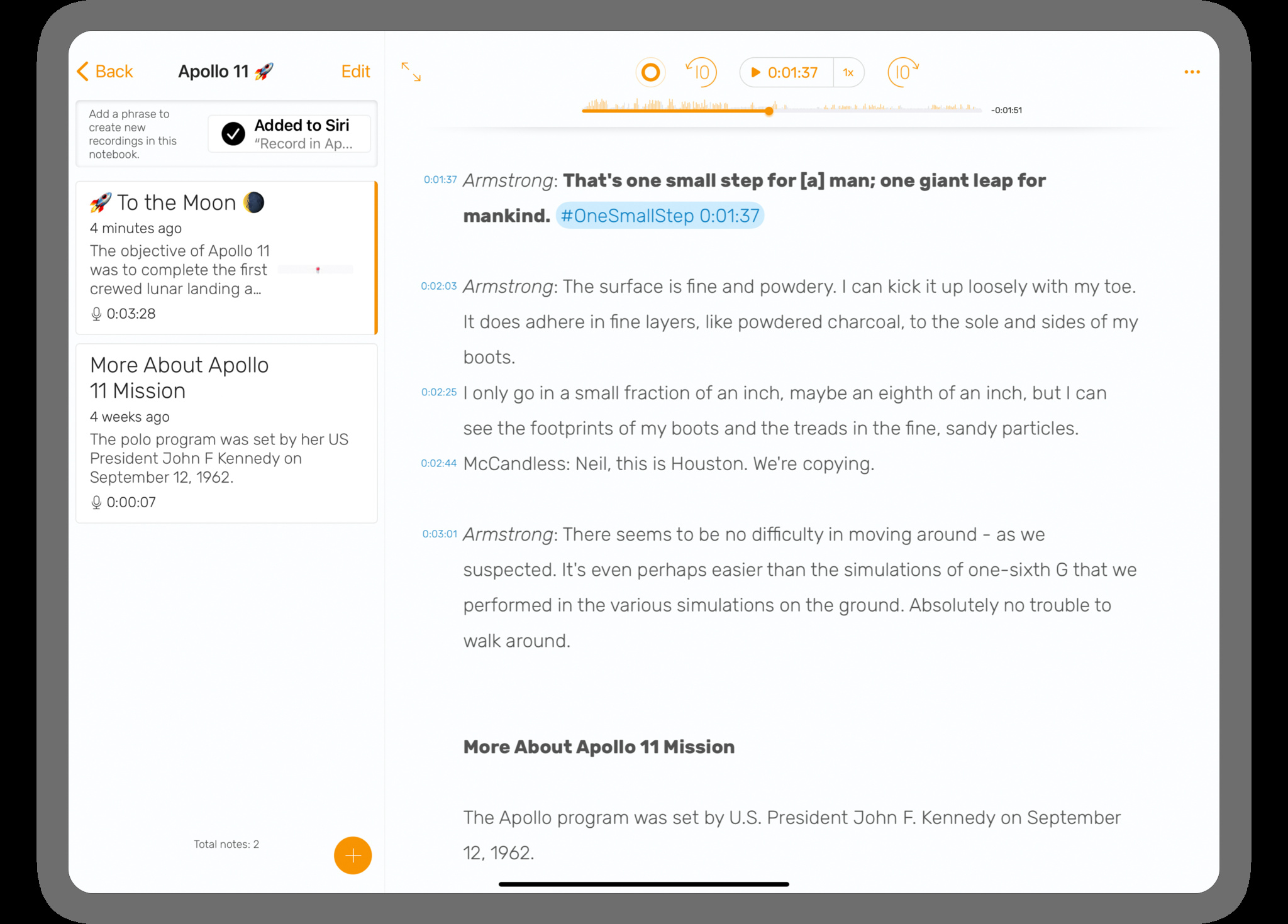

Happily, that pain inspired the pair to create Noted, a recording app that lets you add text annotations and hashtags to your recordings as you’re making them. After you tap the big red button, anything you type — “launch update,” “convoluted calculus formula,” “funniest quote of the interview” — is automatically time-stamped.

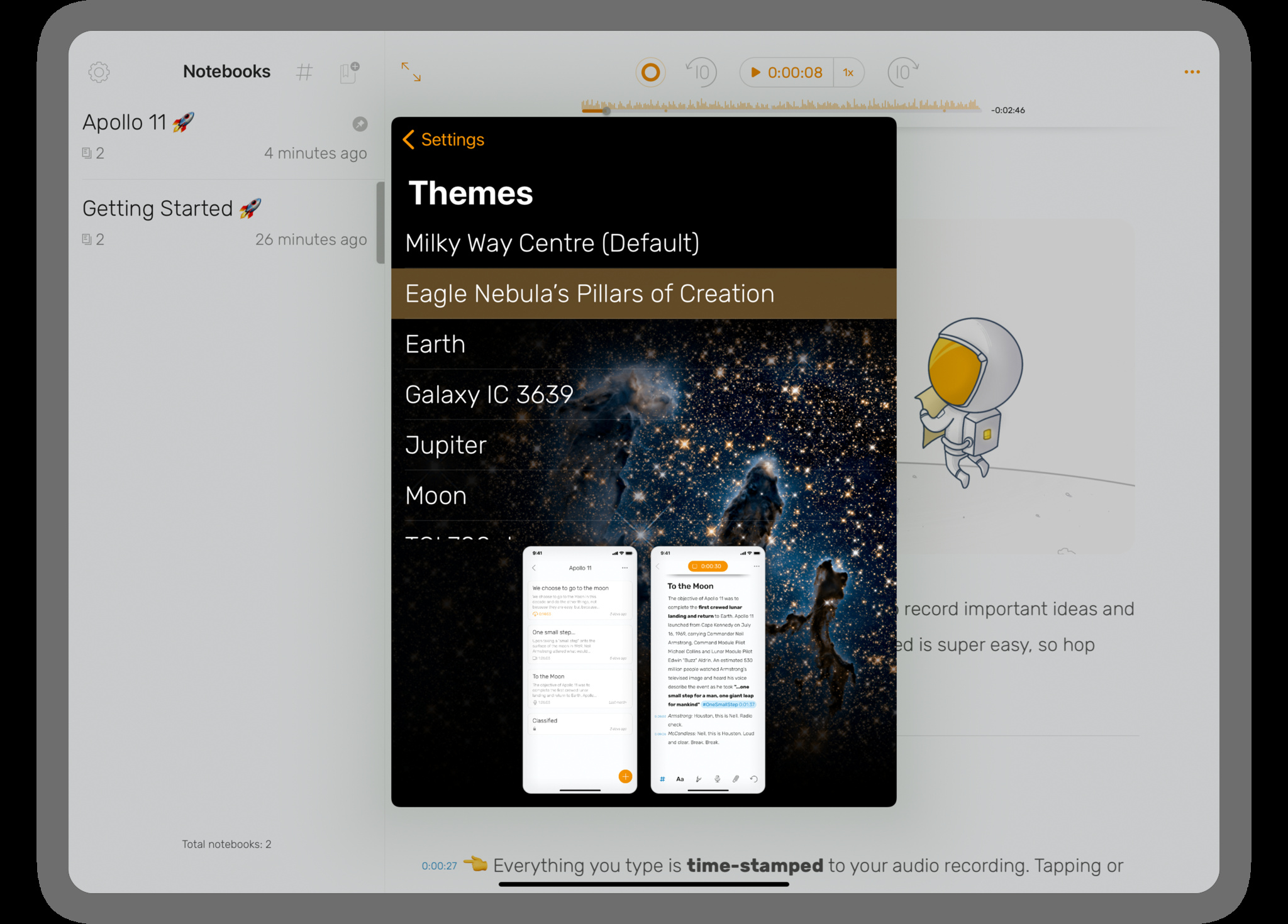

With its mix of audio and text, *Noted* is one of the most powerful note-taking apps in the galaxy.

We caught up with Yuen to talk about his app’s growth, Michelle Obama’s inspiring words at the Apple Worldwide Developers Conference (WWDC), and the challenge of getting artificial intelligence to understand silence.

You’ve said you and your cofounder were inspired by a very long meeting…

We were! When we came out, he said, “Do you remember the time they mentioned this and that?” I sent him the recording, but neither of us could remember the timeline. We thought, “Why can’t we marry note-taking and audio recording, so when someone asks for that time, you can tap back to a hashtag?” That was the core of the idea.

After you launched, where did you see Noted used most?

Classes and meetings. We got a lot of images from users in lecture halls and conferences, including WWDC. Journalists use it all the time; songwriters use it to write lyrics and play them back.

Add time stamps and hashtags to key moments in your recordings.

What surprised you about how people were using Noted?

One thing was hearing from users who are blind. Then, in 2017, we heard Michelle Obama talk at WWDC about imagining someone, somewhere, whom you could lift up with your work. I asked myself, “Are we really building for everyone? Are we doing all that we can?” I realized we had room to improve. We started integrating VoiceOver, then worked with blind people to improve that. A teacher at a school in New Zealand reached out to help her kids take notes during class. This is something that touches our hearts.

Noted can automatically skip over long silences in your recordings. How did you create that feature?

We got a lot of suggestions from people who were in lectures all day. Their recordings were three or four hours long because they forgot to stop recording when there was a break, and they’d have to scrub through those 30 minutes or whatever it was. So we put our thinking caps on and looked into machine learning. We struggled with that! But when Core ML was released, one of our guys sat down and went through thousands of hours of audio to train it. We knew it was doable.

What advice would you give to developers who are just starting out?

You can’t do everything yourself. One person isn’t a symphony — you need everyone to play. When your app gets out the door, that’s the start of the whole thing, not the end. You need people in customer support. You need people in design. You need marketing people, you need engineers. You need different types of people to do distinct things.
